Hello,

It seems that our event store is corrupted, and I have no idea how to analyze or fix it.

Just for context:

At the beginning of September, we restored the event store while migrating from AxonServer Standalone to an AxonServer Cluster. A restore was required because we had to change the underlying disks. We tested the restore procedure multiple times beforehand, including with production backups. The migration itself looked successful, and the AxonServers and applications appeared to work fine afterward.

Unfortunately, we later discovered errors that suggest there are “holes” or corrupted data in the event store.

Observations:

“Invalid sequence number for aggregate” errors

We see a number of “Invalid sequence number for aggregate” warnings in the AxonServer logs, each followed by a CommandExecutionException on the client side:

AxonServer:

"logger": "io.axoniq.axonserver.message.event.SequenceValidationStreamObserver",

"message": "Invalid sequence number for aggregate in context default. Received: 0, expected: 1",

Clients:

org.axonframework.commandhandling.CommandExecutionException: An exception has occurred during command execution

Caused by: java.util.NoSuchElementException: No value present

at java.base/java.util.Optional.get(Optional.java:143)

at org.axonframework.eventsourcing.EventStreamUtils.lambda$upcastAndDeserializeDomainEvents$1(EventStreamUtils.java:88)

at java.base/java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:197)

at java.base/java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:197)

at java.base/java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:197)

at java.base/java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:197)

at java.base/java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:197)

at java.base/java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:197)

at java.base/java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:197)

at java.base/java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:197)

at java.base/java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:197)

at java.base/java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:197)

at java.base/java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:197)

at io.axoniq.axonserver.connector.event.AggregateEventStream$1.tryAdvance(AggregateEventStream.java:75)

at java.base/java.util.stream.StreamSpliterators$WrappingSpliterator.lambda$initPartialTraversalState$0(StreamSpliterators.java:292)

...
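To get a rough picture of how often the server-side warning occurs and with which sequence numbers, the log could be tallied with something like the sketch below. It assumes only the message text quoted above; the function name `tally_sequence_warnings` and the idea of feeding it raw log lines are illustrative assumptions, not part of any AxonServer tooling.

```python
import re
from collections import Counter

# Pattern derived from the warning text quoted in this post. The aggregate
# identifier is optional because it is blank in the quoted message.
PATTERN = re.compile(
    r"Invalid sequence number for aggregate\s*(?P<aggregate>\S*?)\s*"
    r"in context (?P<context>\S+)\. "
    r"Received: (?P<received>\d+), expected: (?P<expected>\d+)"
)


def tally_sequence_warnings(lines):
    """Count warnings per (context, received, expected) combination."""
    counts = Counter()
    for line in lines:
        match = PATTERN.search(line)
        if match:
            key = (
                match["context"],
                int(match["received"]),
                int(match["expected"]),
            )
            counts[key] += 1
    return counts
```

Grouping by the received/expected pair would show whether all warnings follow the same pattern (for example, always "Received: 0") or whether several different patterns are mixed together.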

Missing aggregates or events

We also found more than 1,000 aggregate IDs that exist in our projection tables but have no corresponding events in AxonServer, which is very confusing.

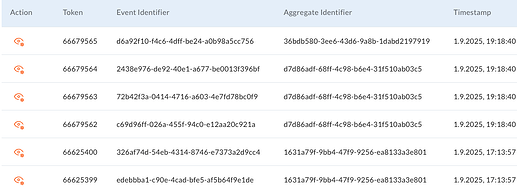

Additionally, we see aggregates in the AxonServer UI that appear incomplete. For example, an aggregate may show sequence numbers 2 to 5, but sequence numbers 0 and 1 are missing.
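The kind of gap described here can be stated precisely: given the sequence numbers that are visible for one aggregate, list those missing between 0 and the highest one seen. A minimal sketch, assuming the sequence numbers have already been collected into a plain list (how they are obtained from AxonServer is not covered, and the function name is hypothetical):

```python
def missing_sequence_numbers(observed):
    """Return the sequence numbers absent from 0..max(observed), ascending."""
    present = set(observed)
    if not present:
        return []
    return [n for n in range(max(present) + 1) if n not in present]


# The example from the text: sequence numbers 2 to 5 are visible.
print(missing_sequence_numbers([2, 3, 4, 5]))  # [0, 1]
```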

Since the projection tables contain data for these aggregates, we know they should exist, but they cannot be found in AxonServer. Naturally, sending commands for such aggregates results in errors.
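The comparison behind this observation is a set difference between the aggregate IDs known to the projection and those that have at least one event. A minimal sketch, assuming both ID lists have been exported to plain collections beforehand; the function and parameter names are hypothetical:

```python
def orphaned_projection_ids(projection_ids, event_store_ids):
    """Return IDs present in the projection but absent from the event store."""
    return sorted(set(projection_ids) - set(event_store_ids))


# Illustrative values only, not real data.
projection = ["a-1", "a-2", "a-3"]
event_store = ["a-1", "a-3"]
print(orphaned_projection_ids(projection, event_store))  # ['a-2']
```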

- Is there any way to analyze the event store to understand what is going on?

- Could the index or bloom filter files be out of sync with the event files?

- Are there any tools available that could help us?

We are running AxonServer v2025.1.4, with an event store size of approximately 107 GB.

Thanks in advance for your help.

Klaus